Rather than simply reprinting the results (which can be downloaded by clicking on the hyperlink in this page), I thought I’d also have a look at the results themselves, and see what comment, if any, I could furnish.

Right, then.

(Awesome image found here)

The questionnaire, which ran for 2 weeks, was sent out to some 40,000 researchers, all of whom were published authors. Of these 40,000, 4,037 (10%) completed the survey. Of that 10%, 89% classed themselves as ‘reviewers’.
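For a sense of scale, here is a quick back-of-the-envelope sketch of what those percentages mean in head counts. The variable names are my own, and since the report only says "some 40,000" were invited, treat the results as ballpark figures rather than exact ones.

```python
# Rough head counts from the figures quoted above.
invited = 40_000         # "some 40,000" in the report, so this is approximate
completed = 4_037        # respondents who completed the survey
reviewer_share = 0.89    # share of respondents classing themselves as reviewers

response_rate = completed / invited
reviewers = round(completed * reviewer_share)

print(f"Response rate: {response_rate:.1%}")
print(f"Approximate number of self-classed reviewers: {reviewers}")
```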

Of course, my first question at this point is whether this is representative of researchers as a whole… Do 89% of all (published) researchers review? Or was there some intrinsic bias towards the subject that meant that people who review were more likely to complete the questionnaire (and hence skew the findings)?

Moving on.

Satisfaction

In general, respondents (not just reviewers) were fairly happy with peer review as a system - 61% were satisfied (although only 8% were very satisfied) with the process, and only 9% were dissatisfied. I would dearly have loved to be able to organise a discussion or two around that latter 9% or so, to figure out why (which is why qualitative research is so important).

Reviewers generally felt that, although they enjoyed reviewing and would continue to do it, there was a lack of guidance on how to review papers, and that some formal training would improve the quality of reviews. There was also the strong feeling that technology had made it easier to review than 5 years ago.

Improvement

Of those who agreed that the review process had improved their last paper (83% of respondents), 91% felt that the biggest area of improvement had been in the discussion, while only half felt that their paper’s statistics had benefited. Of course, and as the survey rightly points out, this may be because this includes those whose papers contained no such fiddlies…

Why review?

Happily, most reviewers (as opposed to respondents, which includes reviewers and the leftover authors) reviewed because they enjoyed playing their part in the scientific community (90%) and because they enjoyed being able to improve a paper (85%). Very few (16%) did it in the hope of increasing the future chances of their own paper being accepted.

[On that point - is that last thought/stratagem valid? Does reviewing improve one’s chances of paper acceptance in any way?]

Amongst reviewers, just over half (51%) felt that payment in kind by the journal would make them more likely to review for a journal - 41% wanted actual money (then again, 43% didn’t care). 40% also thought that acknowledgment by the journal would be nice, which is odd considering that the majority of reviewers favour the double-blind system (see below). Even more strangely, 58% thought that having their report published with the paper would disincentivise them, and 45% felt the same way about their names being published with the paper, as a reviewer. [Note: in the latter point, for example, that 45% does not mean that 55% would be incentivised - a large proportion of the remaining 55% is in fact taken up by ambivalence towards the matter.]

Amongst those who wanted payment, the vast majority wanted it either from the funding body (65%) or from the publisher (94%). In a lovely case of either solidarity or self-interest, very few (16%) thought that the author should pay the fee. Looking at that 94% who would want to be paid by the journals to review - where would this leave the open access movement? One of the major, and possibly most valid, criticisms currently being leveled against paid subscription journals is that their reviewing is done free of charge. Indeed, paying reviewers might even lead to a rise in subscription prices, meaning even more researchers are unable to access paid content. Hmmmm.

To review, or not to review

The most-cited reasons for not reviewing were that the paper was outside their area of expertise (58%) or that they were too busy with their own work (30%). Of course, the first point does suggest that journals could, and should, do a better job of identifying which papers should go to whom… Certainly, this would be a brilliant place for a peer-review agency to start!

The mean number of review invitations declined over the last year was 2, but roughly a third of respondents would be happy to review 3-5 papers a year, and a further third 6-10! Which suggests a great deal of underutilisation of resources, especially considering that the primary reason for not accepting an invitation to review is that the reviewer and subject weren’t properly matched.

Purpose

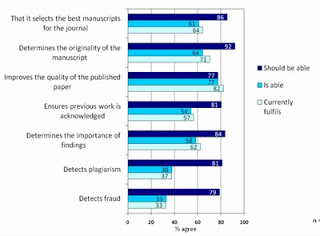

For this, I’d suggest the best thing to do is have a look at the graph below (which is from the report, again to be found here).

(c) Sense about Science, Peer Review Survey 2009: Preliminary Findings, September 2009, UK. Click on image to enlarge and make more legible.

What is interesting is that peer review is underperforming significantly on some of the functions felt to be most important, namely the identification of the best manuscripts for the journal and originality. (Assuming that by this they mean something along the lines of ‘completely novel and groundbreaking’, there’s a very interesting discussion to be had around that, considering that PLoS specifically doesn’t look to that, something I talk about in this post.)

It’s also performing poorly at the detection of plagiarism and fraud, although one wonders whether that is a realistic expectation (and, certainly, something that technology could perhaps be better harnessed to do).

Types of review

Most reviewers (76%) felt that the double-blind system currently used is the most effective system - usage stats were thought effective by the fewest reviewers (15%).

Length of process

Gosh. This was interesting. So, despite the fact that some 75% of reviewers had spent no more than 10 hours on their last review, and 86% had returned it within a month of accepting the invitation, 44% waited 1-2 months for a first decision on the last paper they had submitted, and a further 35% waited between 2 and 6+ months.

Of course, the waiting time for final acceptance scales up appropriately, as revision stages took 71% of respondents between 2 weeks and 2 months. So final acceptance took 3-6 months for a third of respondents, and for a further third, could take anything between that and 6+ months.

Now, I can understand that the process is lengthy, but it does seem like there’s a fair amount of slack built into the system, and it must have something of an impact on the pace of science, particularly in fast-moving fields. I don’t have an answer, but Nature is just about to release some interesting papers discussing whether scientists should release their data before publication, in order to try to prevent blockages… (UPDATE: Nature’s special issue can be found here).

It’s worth mentioning, despite whatever I may say, that slightly more respondents thought peer review lengths were acceptable (or better) than not. Comment, anyone?

Collaboration

The vast majority of reviewers (89%) had done the review by themselves, without the involvement of junior members of their research group, etc. While I can understand this, to some extent, I wonder whether it doesn’t inform the feeling that some sort of training would be useful. Could a lot of that not be provided by involving younger scientists in the process?

Final details

Just over half (55%) of respondents have published more than 21 articles in their career, with 11% having published over 100. Not much comment there from me, other than O_o!!

89% of respondents had reviewed at least one article in the last year (already commented on this, above).

For those interested, most respondents were male, over 36 years old, and worked at universities or colleges.

While half worked in Europe or the US, 26% worked in Asia (to be honest, I was pleasantly surprised that the skew towards the western world wasn’t larger), and the most well-represented fields were the biological sciences and medicine/health.

So yes! There you have it - a writeup that’s a little longer than the exec summary, but a little shorter than the prelim findings themselves.

It does raise a number of interesting questions, particularly around the role of journals in the process, but it also confirms what I think we’ve all heard - that while science publishing is going through some interesting times, very few would dispute that peer review itself is very important to the process. Although it might need to do a little bit of changing if it’s to remain as important as it is currently…