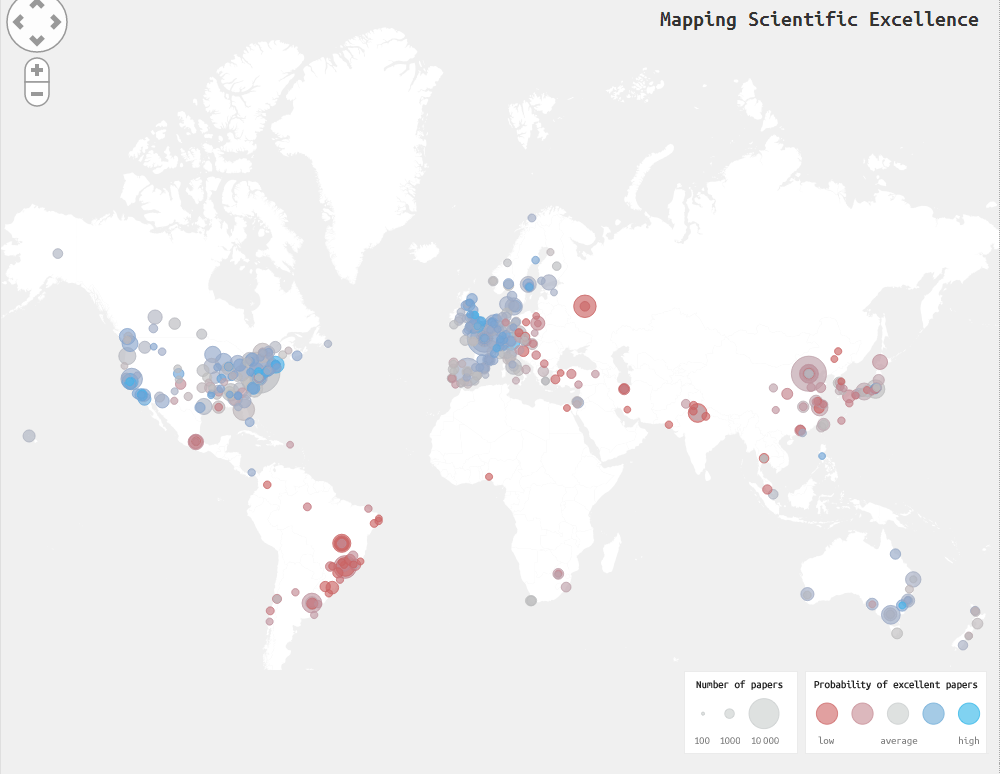

Introducing Mapping Scientific Excellence - a new, interactive web app/map which ranks scientific institutions around the world based on citations (more on that shortly).

The app was designed by Lutz Bornmann and colleagues at Germany’s Max Planck Society. It looks at institutions around the world and ranks them in 17 subject areas according to the rate at which they produce high-quality (in this case, frequently cited) scientific papers. Bornmann and colleagues say it’s part of a new evaluation approach, spatial scientometrics, which looks at organisations in terms of both excellence and geography.

How did they do it? Well, they used publication and citation data gathered from Scopus (Elsevier), described on SciVerse as “the world’s largest abstract and citation database of peer-reviewed literature”. For each subject area, the app counts the number of papers an institution produced, and then counts how many of those are in the top 10% most highly cited. If more than 10% of an institution’s papers fall into this ‘excellent’ category, it gets a positive rating; if fewer than 10% do, it gets a negative rating.
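To make that arithmetic concrete, here is a minimal Python sketch of the simple comparison described above. It is only an illustration under stated assumptions: the `InstitutionCounts` record, its field names and the `rating` function are hypothetical, and the real app fits multi-level statistical models (with an optional significance filter) rather than applying a bare threshold like this.

```python
from dataclasses import dataclass


# Hypothetical record for one institution in one subject area.
# Field names are illustrative, not taken from the app or from Scopus.
@dataclass
class InstitutionCounts:
    name: str
    total_papers: int   # papers the institution published in the subject area
    top10_papers: int   # of those, papers in the top 10% most highly cited


def excellence_share(counts: InstitutionCounts) -> float:
    """Fraction of the institution's papers that are in the top 10% most cited."""
    if counts.total_papers <= 0:
        raise ValueError("total_papers must be positive")
    return counts.top10_papers / counts.total_papers


def rating(counts: InstitutionCounts) -> str:
    """Positive/negative rating against the 10% benchmark (no significance test).

    Compares integers (10 * top10 vs total) to avoid floating-point edge cases
    when the share is exactly 10%.
    """
    if counts.total_papers <= 0:
        raise ValueError("total_papers must be positive")
    scaled = 10 * counts.top10_papers
    if scaled > counts.total_papers:
        return "positive"
    if scaled < counts.total_papers:
        return "negative"
    # Exactly 10%: the description above doesn't say how this case is labelled.
    return "neutral"


if __name__ == "__main__":
    # Made-up numbers, purely for illustration.
    a = InstitutionCounts("Example University A", total_papers=1200, top10_papers=150)
    b = InstitutionCounts("Example Institute B", total_papers=800, top10_papers=64)
    for inst in (a, b):
        print(inst.name, f"{excellence_share(inst):.1%}", rating(inst))
```

With these invented figures, Example University A has 12.5% of its papers in the top 10% and rates positive, while Example Institute B has 8.0% and rates negative. The expected share is 10% because, by construction, one in ten papers across the whole field lands in the top 10%.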

Agricultural and Biological Sciences. (Note: I left the ‘Only statistically significant results’ box unticked, here and for the results below.) That bright blue little dot in eastern Australia? That’s the Australian Research Council.

Naturally, I was curious about New Zealand, and so I took a closer look…

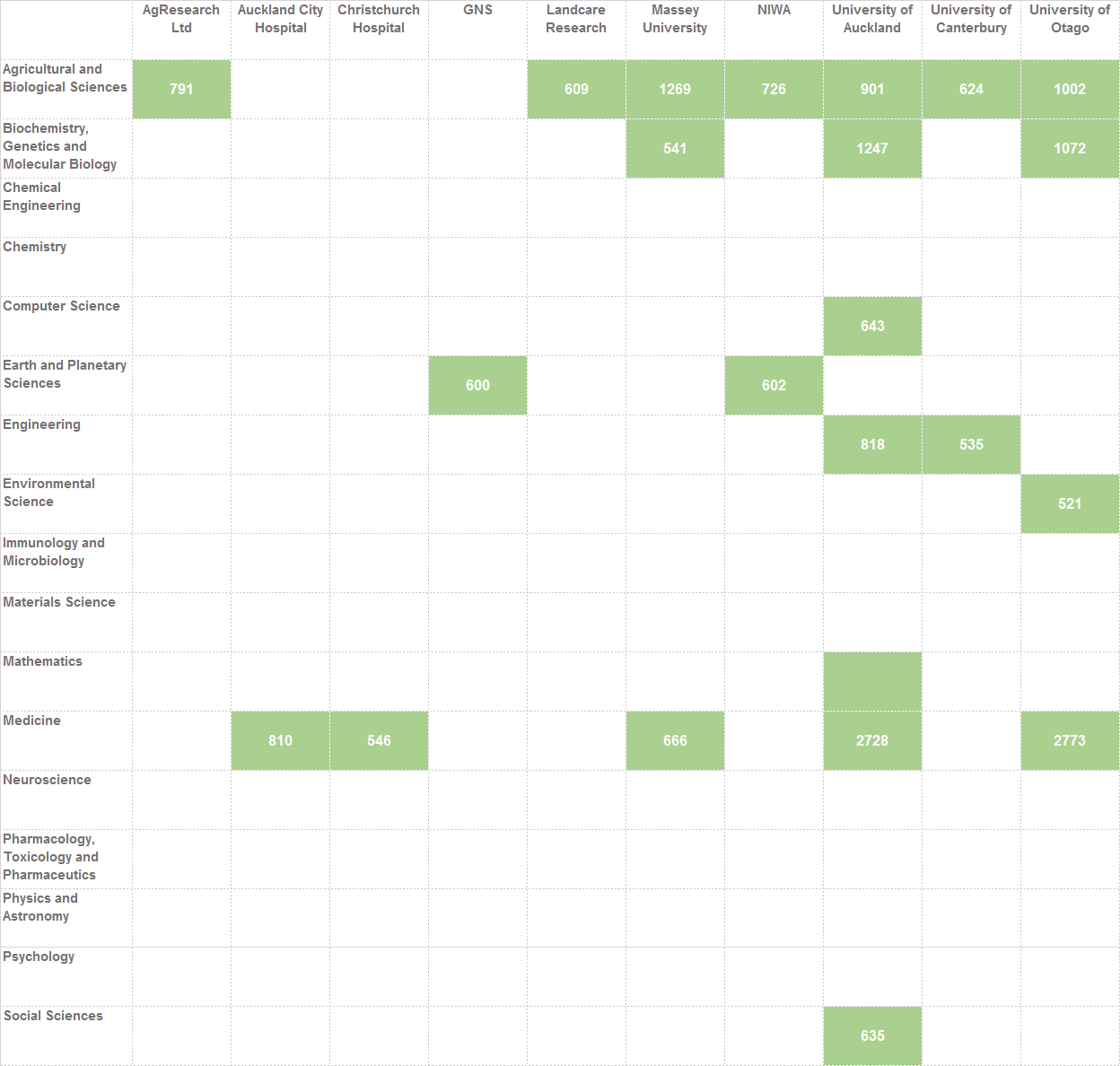

Which NZ institutions were included?

- AgResearch Ltd

- Auckland City Hospital

- Christchurch Hospital

- GNS

- Landcare Research

- Massey University

- NIWA

- University of Auckland

- University of Canterbury

- University of Otago

Why these? Well, “only institutions…that have published at least 500 articles, reviews and conference papers in the period 2005 to 2009 in a certain Scopus subject area” were considered.
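The inclusion rule works like a filter applied separately in each subject area. Here is a minimal Python sketch, assuming a hypothetical `Paper` record and lowercase document-type labels (Scopus uses its own type codes); only the 500-paper threshold, the 2005 to 2009 window and the three document types come from the quoted rule.

```python
from collections import Counter
from dataclasses import dataclass

# Values from the quoted inclusion rule.
MIN_PAPERS = 500
START_YEAR, END_YEAR = 2005, 2009
# Hypothetical labels for articles, reviews and conference papers.
COUNTED_TYPES = {"article", "review", "conference paper"}


@dataclass
class Paper:
    institution: str
    subject_area: str
    year: int
    doc_type: str


def eligible_institutions(papers, subject_area):
    """Return institutions with at least MIN_PAPERS qualifying papers in one area."""
    counts = Counter(
        p.institution
        for p in papers
        if p.subject_area == subject_area
        and START_YEAR <= p.year <= END_YEAR
        and p.doc_type in COUNTED_TYPES
    )
    return {inst for inst, n in counts.items() if n >= MIN_PAPERS}
```

Because the threshold is applied per subject area, an organisation that falls below 500 qualifying papers in every area would not appear on the map at all, however well cited its work is.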

How did we do?

Now, this is where things get interesting. It doesn’t seem possible to view overall results (i.e. across all 17 subject areas), but I _could_ do a quick comparison to see which NZ institutions appeared in which categories. Here’s what I found…

The numbers in the blocks are the number of papers. I’m afraid there were only a couple of instances of an above-average ‘probability of excellent papers’, and the margins were pretty slim on those.


Conclusion?

Of course, ranking citations isn’t the only way of measuring scientific excellence, and the use of citations as an indicator of quality is under increasing fire for a number of reasons. I’ve even heard it described as a ‘perverse incentive’ that does as much harm as good for science, or more. Still, I think this is a useful and interesting indicative tool for tracking who’s doing what, and where.

And, of course, it’s a brilliant example of the huge power of data visualisation: taking large datasets and presenting them in a way that’s not only easy to understand, but that also lets new, sometimes surprising insights emerge very quickly. For example, two of the top three institutions in physics and astronomy are Spanish, and Partners Healthcare System, a non-profit healthcare organisation based in Boston that funds research (mostly in the life sciences), ranks above MIT and Harvard. The list goes on.

As the MIT Technology Review puts it:

> Of course, every ranking system has its advantages and disadvantages. In this case, the ability to rank places by discipline and location is hugely useful.
>
> But there are disadvantages too. Citations take no account of the relative amount of work of different authors. Nor does the ranking method distinguish between papers that are cited because they are excellent and those that are cited because they are not.

Some questions which jumped out at me, from an NZ perspective, include:

- Why aren’t some of our other universities and science organisations represented here? Are there specific policies in place about the journals in which they publish?

- Is it enough for those that are represented to sit in the ‘average’ pool for the probability of producing excellent papers?

- Will something like this, which shows very clear hotspots of good (and not so good) science, affect where people choose to study, for example? And how will organisations react to it?

Anyway, head over and have a play :)

References

If you’re curious, check out the site; you can also read the full paper on arXiv: “Ranking and mapping of universities and research-focused institutions worldwide based on highly-cited papers: A visualization of results from multi-level models.”

[H/T io9, MIT Technology Review]
